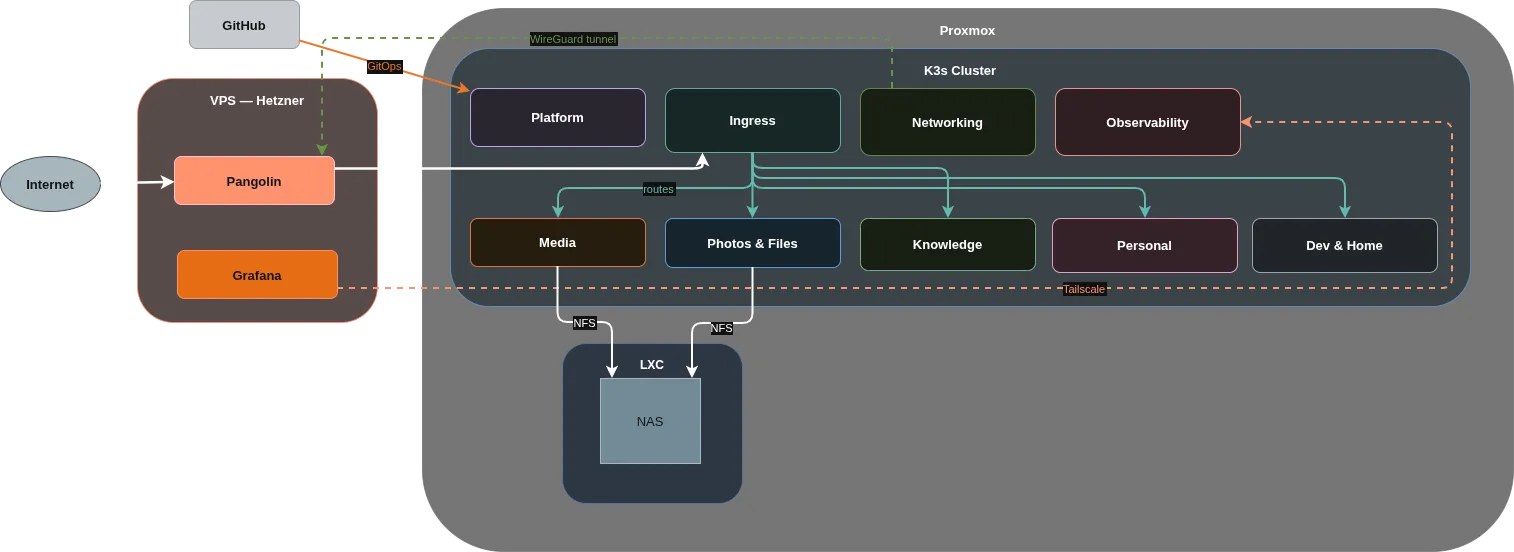

For most of last year, my homelab ran on Docker Compose. Each service had a compose file, Ansible handled the deployment, Terraform provisioned the VMs and LXC containers on Proxmox. It worked. Services were up, data was safe, I was happy.

Then I heard about GitOps, started reading about ArgoCD, and made the mistake of watching one too many conference talks about Kubernetes. Three months later I was rebuilding the whole thing from scratch.

What I had before

The setup was Terraform + Ansible + Docker Compose. Terraform provisioned infrastructure on Proxmox. Ansible managed configuration and deployed services via Docker Compose files. Straightforward, well-documented, and solid for a homelab.

The problem wasn’t that it was broken. It was that every time I wanted to change something (update an image, tweak a config, add a new service), I had to SSH into a server and run an Ansible playbook. If I applied a change without committing it to git first, live state and repo would quietly diverge. Nothing enforced consistency. The git repo was supposed to be the source of truth, but nothing checked that it actually matched reality.

GitOps, and why it changes things

GitOps is the idea that git is the single source of truth for your infrastructure, and the system automatically reconciles itself to match what’s in the repo. Push a change, the system applies it. Something drifts, the system fixes it. You commit instead of running playbooks or SSHing anywhere.

This sounded like what I wanted. So the question was whether I could get there with Docker Compose and Ansible.

Ansible is imperative by design. You write steps that execute in order, a procedure rather than a declaration of desired state. Getting true GitOps out of it means adding AWX, custom CI/CD pipelines, and webhooks, and at that point you’re spending more time on the tooling than on the infrastructure itself.

Kubernetes is declarative from the ground up. Every resource is described in YAML that says what should exist. The control plane compares what you declared with what is actually running and continuously works to make reality match. ArgoCD sits on top and watches a git repo: when the repo changes it applies the change, and when the cluster drifts from the repo it can sync it back automatically.
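To make the imperative/declarative distinction concrete, here is a minimal Python sketch of a single reconcile step. It is not how Kubernetes or ArgoCD are implemented; the `reconcile` function, the dict-based state, and the service names and image tags are all assumptions made for illustration. The `desired` dict stands in for what is in git, and `actual` for what is running.

```python
def reconcile(desired: dict[str, str], actual: dict[str, str]) -> list[str]:
    """Mutate `actual` until it matches `desired`; return the actions taken."""
    actions = []
    # Create or update anything that is missing or differs from the declaration.
    for name, image in sorted(desired.items()):
        if name not in actual:
            actions.append(f"create {name} ({image})")
        elif actual[name] != image:
            actions.append(f"update {name}: {actual[name]} -> {image}")
        actual[name] = image
    # Prune anything that is running but no longer declared.
    for name in sorted(set(actual) - set(desired)):
        actions.append(f"delete {name}")
        del actual[name]
    return actions


# Hypothetical service names and image tags, for illustration only.
desired = {"navidrome": "navidrome:2", "traefik": "traefik:3"}
actual = {"navidrome": "navidrome:1", "old-service": "old:1"}

first = reconcile(desired, actual)
second = reconcile(desired, actual)  # already converged, so nothing to do
```

Running it twice is the point: the second call returns no actions because the state has already converged. A playbook can be written to be idempotent too, but nothing runs it for you continuously.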

I also just wanted to learn Kubernetes

The second reason was that I wanted to learn Kubernetes, and running a cluster was the fastest way to do it.

Reading docs and following tutorials only gets you so far. Actually running a cluster, dealing with pods that won’t start and services that can’t reach each other and ingress rules that silently do nothing, teaches you things no tutorial covers. Every problem you debug sticks. I can read a Kubernetes event log now without squinting at it, which I couldn’t when I started.

My homelab is where I’m allowed to break things. If Navidrome goes down for an afternoon while I’m debugging a misconfigured IngressRoute, nobody cares.

What I actually gained

Self-healing is real. When a container crashes, Kubernetes restarts it with exponential backoff: the wait doubles after each failed start, up to a five-minute cap. Docker Compose’s restart: unless-stopped does something similar, but the two behave differently under sustained failures.
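As a rough illustration of what exponential backoff means here, the sketch below computes a capped, doubling delay schedule. The defaults (10 seconds to start, 300 seconds as the cap) follow the kubelet behaviour as I understand it from the Kubernetes docs. The function name `backoff_delays` is hypothetical, and this is not kubelet code.

```python
def backoff_delays(restarts: int, initial: float = 10.0, cap: float = 300.0) -> list[float]:
    """Return the wait (in seconds) before each of the first `restarts` restarts."""
    delays = []
    delay = initial
    for _ in range(restarts):
        delays.append(delay)
        # Double the wait after every failed start, but never exceed the cap.
        delay = min(delay * 2, cap)
    return delays


schedule = backoff_delays(7)
print(schedule)
```

A container that keeps crashing is retried quickly at first and then settles at one attempt per cap interval, which keeps a broken service from hammering the node.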

Rolling deployments mean a new version comes up before the old one goes down. With Docker Compose you recreate the container and have a gap. For most homelab services that gap doesn’t matter much, but getting in the habit of thinking about it does.
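The difference is easier to see in a toy simulation. The sketch below models a rollout that starts one new replica before stopping one old one (roughly what a Deployment does with maxSurge set to 1 and maxUnavailable set to 0) and compares it to a stop-then-start update. The function names and the step model are assumptions for illustration, and readiness checks are ignored.

```python
def rolling_update(replicas: int) -> list[int]:
    """Return the number of serving replicas after each step of a surge-first rollout."""
    old, new = replicas, 0
    serving = []
    while old > 0:
        new += 1  # start one new replica first (surge)
        serving.append(old + new)
        old -= 1  # only then stop one old replica
        serving.append(old + new)
    return serving


def recreate_update(replicas: int) -> list[int]:
    """Return serving replicas per step when everything is stopped, then restarted."""
    return [0, replicas]


rolling = rolling_update(1)
recreate = recreate_update(1)
```

With a single replica, the rolling variant never drops below one serving instance, while the recreate variant passes through zero. That zero is the gap.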

Secret management got a lot cleaner. The External Secrets Operator pulls secrets from Bitwarden into the cluster as Kubernetes Secret objects, which pods then consume as environment variables. Before this I was managing encrypted Ansible Vault files by hand and periodically losing track of which version was current.
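For context on what ends up in the cluster: a Kubernetes Secret stores its values base64-encoded under `data`. The sketch below builds such an object as a plain Python dict from key/value pairs fetched elsewhere. It is not the External Secrets Operator’s code; `build_secret`, the secret name, and the sample value are all hypothetical.

```python
import base64


def build_secret(name: str, namespace: str, values: dict[str, str]) -> dict:
    """Build a Kubernetes Secret manifest (as a dict) from plain-text values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # The API expects every value under `data` to be base64-encoded.
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


# Placeholder name and value, standing in for something pulled from a vault.
secret = build_secret("navidrome-env", "default", {"API_KEY": "example"})
```

Base64 is encoding, not encryption, which is one reason to keep the real values in an external store rather than in the git repo.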

The ecosystem is useful too. Prometheus, Loki, Tempo, cert-manager, and Traefik all ship Helm charts that assume Kubernetes. The setup path for observability and ingress is more direct than it was with my Compose setup.

And portability, which I didn’t expect to care about: the manifests I write for k3s at home are essentially the same ones that run on EKS, GKE, or AKS. The hours I spend on this transfer directly to what I do at work.

What it cost me

Kubernetes is significantly more complex than Docker Compose. More layers of abstraction, more things that fail silently, more concepts you need to understand before anything makes sense.

Debugging a Docker Compose issue is usually quick: check the logs, fix the env var, restart the container. Debugging something in Kubernetes means checking pods, then events, then the ingress, then the service, then the network policy, then wondering whether it’s a DNS issue inside the cluster. It does get easier. The early weeks are rough.

Resource overhead is real too. I’m running three VMs to host a cluster that serves one household. That’s more RAM and more moving parts than a single Docker host needs. For most homelab use cases it’s overkill (and probably for mine too).

Was it worth it?

Yes, though not for the reasons I thought going in.

I expected cleaner operations and better reliability, but the main benefit turned out to be that Kubernetes forces you to understand it. There’s no real way to brute-force your way through a broken IngressRoute or a pod that can’t pull its secrets. I had to figure out service discovery, ingress rules, and secrets injection from scratch, reading events, docs, and the occasional very long GitHub issue.